By Dan Woods, Founder and CEO of Socotra

I’m an engineer.

Engineering was my education, my first job, and my favorite hobby long before it was my career. I’ve spent my working life building enterprise software, starting with heavy contributions to the Palantir 1.0 codebase in 2006.

Today, AI can write code. People who’ve never made it past “Hello World” are now creating thousands of apps by merely describing their ideas.

Two things are undeniable:

- AI-generated code has unlocked enormous productivity and value.

- The prospect of using AI-generated code in production is terrifying!

Socotra has closely monitored these developments. We’ve used AI-generated code to improve our product and accelerate deployments for our customers—all without sacrificing quality and reliability. I want to share our learnings and best practices with developers across the insurance industry, in hopes that safe productivity gains will help move the industry forward.

Why AI Coding Creates Anxiety for Insurers

A lot of that anxiety comes from so-called “vibe coding.” Vibe coding means using AI to build software by merely describing requirements, without worrying much about structure, testing, or long-term maintenance. Vibe coding can feel reckless (because it is). As if “moving fast and breaking things” at human speed wasn’t scary enough, now we can break things with lightspeed automation. Use this in production? No way.

AI coding has clear potential for productivity gain, but only if insurers address two obstacles:

Obstacle 1: AI-generated code needs to be safe enough for production use

Obstacle 2: AI-generated code must work with existing systems

Without addressing these, AI-generated code is limited to standalone prototyping—which does indeed have value. At Socotra, we’ve long used AI to imagine new portals, product configurations, and workflows. AI-assisted prototyping is fast (and fun, frankly), but still represents less than 3% of the development work we actually do.

However, the real opportunity comes from moving past the two obstacles listed above. Over time, we’ve developed practical ways to make AI-generated code production-ready. We’ve found that AI makes development roughly twice as fast, using half the engineering resources—a 75% cost savings.
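The 75% figure follows from multiplying the two effects. Here is a minimal sketch of that arithmetic, assuming cost scales with the number of engineers times elapsed time (the function name and this cost model are illustrative assumptions, not a formal accounting method):

```python
def relative_cost(speedup: float, staffing_fraction: float) -> float:
    """Cost relative to baseline, assuming cost = engineers x elapsed time."""
    elapsed_fraction = 1.0 / speedup  # twice as fast means half the elapsed time
    return staffing_fraction * elapsed_fraction


cost = relative_cost(speedup=2.0, staffing_fraction=0.5)
savings = 1.0 - cost
print(f"relative cost: {cost:.2f}, savings: {savings:.0%}")
```

Half the engineers for half the time is a quarter of the original cost, which is where the 75% comes from.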

We’re often asked how we do this, and I’m going to share it here.

Want to Try “Vibe Coding” in One Hour?

It’s easy to get started. Tools like Claude Code, Cursor, and GitHub Copilot let you spin up working software in minutes. You can sketch ideas, explore workflows, and try things that would have taken days or weeks for experienced engineers.

I think everyone should try it at least once. Here are some online tutorials my non-technical team members have used to build:

Warning: this can be surprisingly addictive, especially for people who’ve never experienced the joy of coding!

Solving Obstacle 1: Making AI-Generated Code Safe for Production

An LLM can write a research report for me in seconds, but I would never publish the result as is. Code is no different.

AI-generated code should be treated like code written by a very junior engineer. It should never go directly into production. Frankly, no code—whether written by a human or AI—should go directly into production!

Here are some best practices for making it safe.

Best Practices to Make AI-Generated Code Production-Ready:

Engineer Code Review

Experienced engineers need to review AI-generated code, as they would review a junior engineer’s code before putting it into production.

Unit Tests

Unit tests are highly valuable, but it’s dangerous to let AI generate these. If the AI misunderstands your requirements and generates a bug, it’ll probably misunderstand your requirements for the test too. The AI is likely to write unit tests that actually pass only if the bug is there!
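One way to guard against this is to derive expected values by hand from the written requirement, rather than from whatever the implementation returns. The sketch below assumes a hypothetical requirement ("premium is prorated by days covered over a 365-day term and rounded half-up to the cent"); the function and test names are invented for illustration:

```python
from decimal import Decimal, ROUND_HALF_UP


def prorated_premium(annual_premium: Decimal, days_covered: int,
                     days_in_term: int = 365) -> Decimal:
    """Hypothetical function under test: premium owed for a partial term."""
    if not 0 <= days_covered <= days_in_term:
        raise ValueError("days_covered must be between 0 and days_in_term")
    amount = annual_premium * days_covered / days_in_term
    return amount.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


def test_prorated_premium_matches_requirement():
    # Expected values were worked out by hand from the requirement,
    # not copied from the implementation's output.
    assert prorated_premium(Decimal("1200.00"), 365) == Decimal("1200.00")
    assert prorated_premium(Decimal("1200.00"), 0) == Decimal("0.00")
    assert prorated_premium(Decimal("1200.00"), 73) == Decimal("240.00")  # 73/365 = 1/5
    assert prorated_premium(Decimal("1000.00"), 1) == Decimal("2.74")  # 2.7397... rounds up


def test_prorated_premium_rejects_impossible_coverage():
    try:
        prorated_premium(Decimal("1200.00"), 366)
    except ValueError:
        return
    raise AssertionError("expected ValueError for days_covered > days_in_term")


test_prorated_premium_matches_requirement()
test_prorated_premium_rejects_impossible_coverage()
```

Because the expected numbers come from the requirement, a misunderstanding baked into the implementation cannot quietly become the definition of "correct."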

Integration Tests

Integration tests validate the contracts between components. Most production failures occur here, not inside isolated functions. AI often models these complex interactions too simply: over-mocking dependencies, testing only happy paths, and ignoring real failure modes. Integration tests require judgment about how the systems are intended to collaborate. AI can generate integration tests, but you need to review the tests thoroughly and hand-write any test cases that AI missed.
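To make the "happy paths only" problem concrete, here is a sketch of the kind of failure-mode cases worth writing by hand. Everything in it is hypothetical: `BillingService`, `FlakyGateway`, and the retry policy are stand-ins invented for this example, and a hand-written fake replaces a heavily mocked dependency.

```python
class GatewayTimeout(Exception):
    """Raised by the (hypothetical) payment gateway when a charge times out."""


class FlakyGateway:
    """Fake gateway that follows the real contract but fails the first N calls."""

    def __init__(self, failures_before_success: int):
        self.failures_left = failures_before_success
        self.calls = 0

    def charge(self, invoice_id: str, amount_cents: int) -> str:
        self.calls += 1
        if self.failures_left > 0:
            self.failures_left -= 1
            raise GatewayTimeout(invoice_id)
        return f"receipt-{invoice_id}"


class BillingService:
    """Hypothetical component under test: charges an invoice with bounded retries."""

    def __init__(self, gateway, max_attempts: int = 3):
        self.gateway = gateway
        self.max_attempts = max_attempts
        self.status = {}  # invoice_id -> "PAID" or "PENDING_RETRY"

    def collect(self, invoice_id: str, amount_cents: int) -> str:
        for _ in range(self.max_attempts):
            try:
                self.gateway.charge(invoice_id, amount_cents)
            except GatewayTimeout:
                continue
            self.status[invoice_id] = "PAID"
            return "PAID"
        # Never mark an invoice paid unless the gateway confirmed it.
        self.status[invoice_id] = "PENDING_RETRY"
        return "PENDING_RETRY"


def test_happy_path():
    service = BillingService(FlakyGateway(failures_before_success=0))
    assert service.collect("inv-1", 12_000) == "PAID"


def test_recovers_from_transient_timeouts():
    gateway = FlakyGateway(failures_before_success=2)
    service = BillingService(gateway)
    assert service.collect("inv-2", 12_000) == "PAID"
    assert gateway.calls == 3


def test_persistent_outage_is_not_recorded_as_paid():
    gateway = FlakyGateway(failures_before_success=10)
    service = BillingService(gateway)
    assert service.collect("inv-3", 12_000) == "PENDING_RETRY"
    assert gateway.calls == 3
    assert service.status["inv-3"] == "PENDING_RETRY"


test_happy_path()
test_recovers_from_transient_timeouts()
test_persistent_outage_is_not_recorded_as_paid()
```

The first test is the one AI tends to produce unprompted. The second and third encode a judgment about how the two components are supposed to behave together when things go wrong.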

End-to-End Tests

I have some good news: here is a place where AI works great! Use AI to specify tests for overall user-level workflows, which will only pass if the entire system is working as expected.

Static and Dynamic Code Analysis

This is an easy one. All the tools that review engineers’ code quality can also review AI-generated code. There are many popular tools such as SonarQube, CodeQL, and OWASP ZAP. Choose some good ones and use them.

Code-Generation Tool Selection

The best AI tool to use is a matter of opinion, and will likely change faster than your browser can refresh this blog post! At the moment, popular code-generation tools include Codex from OpenAI, Claude Code from Anthropic, and Cursor. Keep watch on the latest developments, and be prepared to change when something better comes. Most importantly, only use AI tools approved by your security team.

Security Team Collaboration

Every insurer has a security team. Socotra also has a full security team for the protection of our customers and to maintain our ISO 27001 certification. We recommend all developers work closely with their security teams to keep up with AI’s rapidly evolving capabilities and vulnerabilities.

Solving Obstacle 2: Integrating AI-Generated Code with Other Systems

We’ve now covered best practices for high-velocity, production-ready AI-generated code. That’s great if it’s all you need, but in the enterprise, code doesn’t operate in isolation. Most insurance software (certainly most insurance core software) wasn’t engineered with AI in mind, and that can drastically reduce the benefits of AI for the enterprise.

Here are the things that make software compatible with AI-generated code. They are a must-have list when selecting enterprise software vendors. Since we designed Socotra with AI in mind, it’s no surprise that Socotra was built according to all of these principles.

Selecting AI-Compatible Enterprise Software

Look For Modular Design

Remember when I said AI-generated code should be treated like code written by a very junior engineer? If you give a junior engineer the keys to your whole code base, you can expect an intractable amount of code reviewing before you can ship it. They need guardrails, and so does AI.

This is why modularity (with well-defined contracts!) is highly important. If the architecture is divided into well-defined plugins, configurations, and integrations, and all the connection points use well-documented open standards, then you can give AI these small components and reasonably review and test each one.

Because Socotra is highly modular, we’re able to make great use of AI. For example, AI can modify an insurance product’s underwriting rules or billing calculation. In each scenario, the code can be efficiently tested and deployed, without danger of breaking other parts of the system.
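To show what a "well-defined contract" can look like in code, here is a generic sketch of a plugin boundary for underwriting rules. It is not Socotra's plugin API (Socotra plugins are written in Java); the `UnderwritingRule` protocol and all other names are assumptions made up for this illustration.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Application:
    driver_age: int
    prior_claims: int


@dataclass(frozen=True)
class Decision:
    accepted: bool
    reason: str


class UnderwritingRule(Protocol):
    """The contract: everything a rule may see and everything it may return."""

    def evaluate(self, application: Application) -> Decision: ...


class MaxPriorClaimsRule:
    """A small, swappable component that AI could write and a human can review."""

    def __init__(self, max_claims: int):
        self.max_claims = max_claims

    def evaluate(self, application: Application) -> Decision:
        if application.prior_claims > self.max_claims:
            return Decision(False, f"more than {self.max_claims} prior claims")
        return Decision(True, "within claims limit")


def underwrite(application: Application, rules: list[UnderwritingRule]) -> Decision:
    """Host code: runs each rule and stops at the first decline."""
    for rule in rules:
        decision = rule.evaluate(application)
        if not decision.accepted:
            return decision
    return Decision(True, "all rules passed")


rules = [MaxPriorClaimsRule(max_claims=2)]
print(underwrite(Application(driver_age=40, prior_claims=1), rules))
print(underwrite(Application(driver_age=40, prior_claims=3), rules))
```

A reviewer only has to read one short class and check it against the contract. The rule cannot reach into billing, documents, or anything else the contract does not hand it.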

Look For Open Languages and Formats

Code-generating AIs are trained on all mainstream programming languages and file formats. They all know Java, Python, and JavaScript. They also know JSON, CSV, and RESTful APIs.

Unfortunately for insurers, a lot of insurance core platforms have invented proprietary languages and file formats, which no LLMs are trained on. Insurers have great difficulty trying to use AI-generated code around these systems.

Socotra always had this consideration in mind and has only ever used widely adopted languages and file formats, such as JSON and CSV for configuration and Java for plugins.
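The practical payoff of open formats is that standard tooling, and any code an AI writes, can read them without a custom parser. A minimal sketch, assuming an invented product configuration (the field names below are hypothetical and do not reflect Socotra's actual schema):

```python
import json

# Hypothetical product configuration; field names are invented for this sketch.
PRODUCT_CONFIG = """
{
  "product": "personal-auto",
  "currency": "USD",
  "coverages": [
    {"name": "liability", "required": true,  "limit": 100000},
    {"name": "collision", "required": false, "limit": 50000}
  ]
}
"""


def required_coverages(config_text: str) -> list[str]:
    """Return the names of coverages marked as required."""
    config = json.loads(config_text)
    return [c["name"] for c in config["coverages"] if c["required"]]


print(required_coverages(PRODUCT_CONFIG))
```

The whole example needs nothing beyond the standard library, which is exactly the situation a proprietary format rules out.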

Look For Documentation

Whether it be APIs, configuration syntax, or system architectures, engineers hate when they have to ask vendors for information that should be provided in documentation. Whereas humans have the privilege of calling support or emailing other engineers, AI gets stuck.

Insurers today need to look at their vendors’ documentation with a very critical eye. In the past, poor documentation was acceptable when supplemented with weeks of training and ongoing access to experts. This model is annoying with human developers, but it totally breaks with AI-generated code.

At Socotra, documentation is a pillar of our engineering core values and always has been. AI quickly and easily uses Socotra documentation to build integrations, configure new insurance products, develop customer portals, and more.

Look For Data-Fluent Systems

From report generation to business intelligence to data lake integrations, many use cases for AI-generated code deal with data. Your AI-generated software will be no better with data than the enterprise software it relies on.

Data fluency for enterprise software means:

- Strong APIs for accessing individual records

- Included data lake for mass queries

- Webhooks and delta file exports for keeping external systems updated in real time

- High-speed server responses for all data retrieval operations

- High uptime so data is always available

- Real-time data consistency across the system

If your enterprise platform doesn’t have strong APIs, then your AI will struggle to write code to interact with it. If it can’t support mass queries, then your AI can’t generate reports for you. If the system is slow, the code your AI generates will be slow. If the system has frequent downtime…you get the idea.

If the flow of data around your enterprise is so complicated and asynchronous that your own engineers struggle to add new capabilities, then your AI-generated code will struggle too.
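As one example of what data fluency buys you, consider delta file exports. The sketch below applies a delta file to an external replica so it stays in step with the source system. The CSV layout (`op`, `policy_id`, `status`, `premium_cents`) is an assumption invented for illustration, not a documented export format.

```python
import csv
import io

# Hypothetical delta export: one row per change since the last export.
DELTA_CSV = """op,policy_id,status,premium_cents
upsert,P-1,active,120000
upsert,P-2,active,80000
upsert,P-1,cancelled,120000
delete,P-3,,
"""


def apply_delta(replica: dict, delta_text: str) -> dict:
    """Apply changes in file order so the replica converges on the source."""
    for row in csv.DictReader(io.StringIO(delta_text)):
        if row["op"] == "upsert":
            replica[row["policy_id"]] = {
                "status": row["status"],
                "premium_cents": int(row["premium_cents"]),
            }
        elif row["op"] == "delete":
            replica.pop(row["policy_id"], None)  # deleting twice is harmless
        else:
            raise ValueError(f"unknown op: {row['op']!r}")
    return replica


replica = {"P-3": {"status": "active", "premium_cents": 50000}}
apply_delta(replica, DELTA_CSV)
print(replica)
```

When the export format is this plain, code like this is easy for AI to generate and easy for an engineer to review.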

Look For MCP Servers

Model Context Protocol (MCP) is currently the most popular standard for connecting AI with other software platforms. A modern enterprise software platform has user interfaces for human interaction, APIs for external software interaction, and MCP servers for external AI interaction.

This is admittedly off-topic when evaluating enterprise software’s ability to integrate well with AI-generated code, but it’s absolutely necessary for any enterprise software to be considered AI-compatible. With an MCP server, the latest LLMs require no code at all to connect with enterprise platforms. This is why MCP is supported by such enterprise software giants as Salesforce, Snowflake, Atlassian, HubSpot, and (of course) Socotra.

Socotra’s MCP server is fully documented with 10-minute instructions for connecting it with Claude or Cursor.
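For the curious, MCP messages are JSON-RPC 2.0 under the hood. An MCP client such as an AI assistant builds them for you, so the sketch below only shows the shape of a tool invocation as it crosses the wire. The tool name `get_policy` and its argument are hypothetical, not a documented Socotra tool.

```python
import json
from itertools import count

_request_ids = count(1)


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 `tools/call` request as sent by MCP clients."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# "get_policy" and "policy_id" are invented names for illustration only.
print(tool_call_request("get_policy", {"policy_id": "P-1"}))
```

The server advertises which tools exist and what arguments they take, which is why the LLM can use them without anyone writing integration code.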

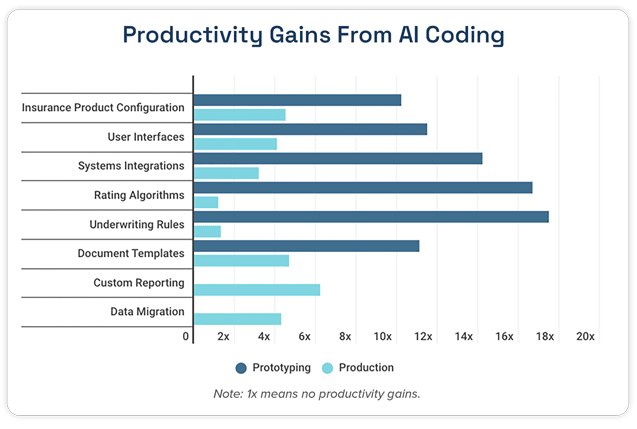

Bottom Line: Productivity Gains

These are real productivity gains Socotra engineers experience on average for each of these engineering tasks. For the reasons outlined under “Solving Obstacle 2” above, almost none of these productivity gains are achievable when working with other insurance cores, because they were not made to work with AI.

These productivity gains are for developers, who can implement these use cases with or without AI. For non-technical users, gains are infinite since they had no other way to generate code previously. However, code generated by someone who doesn’t know how to review it is very dangerous for production. It’s possible in some cases with the right guardrails, and that might be the topic of a future blog post!

Also, when software contains dense business logic with many edge cases, AI can very quickly generate code from English-language requirements, but the testing burden grows quickly. When an AI tests its own logic, the tests always pass. Using a different AI for testing helps a little, but production tests must be carefully reviewed by an experienced engineer. This is why prototyping and production have wildly different productivity gains for rating algorithms and underwriting rules. Also, finalizing requirements for heavy logic consumes more time than implementation, whether AI is used or not.

What’s Next

There is clear and massive power in using AI to help generate code and other digital assets, and also clear danger. I’ve described our best practices for maximizing productivity gain with no sacrifice in quality or safety.

Since AI capabilities are developing very rapidly, I’ll conclude with a few predictions about AI-assisted development in the future:

Expected Improvements to AI-Assisted Development

AI today is capable of making small, isolated changes, even in moderately large codebases. In the next couple of years, AI will be capable of increasingly complex code changes, moving towards entire, moderately sophisticated features. AI will also get better at explaining abstractions and data model changes, including benefits and tradeoffs, to human engineers.

Simultaneously, switching costs between enterprise software platforms will plummet. The cost of moving to Socotra has already dropped by 50% because of AI. Switching costs will continue dropping for AI-compatible platforms (see “Solving Obstacle 2” above).

Thirdly, situations will soon emerge in which truly non-technical business users can safely modify production code. It’s even possible today, in just the right situations with the right guardrails.

Continued Limitations Expected With AI-Assisted Development

Human engineers struggle when working on legacy codebases that lack modularity, coherence, and documentation, and AI will struggle with the same. The only difference is that AI will make mistakes or give up faster. Also, merely translating from old to new programming languages does not improve maintainability.

Secondly, it’ll be a very long time until AI can architect complex systems. Architecting a complex system is a strategic endeavor, involving considerations such as:

- How might business needs change in the future?

- How is the organization structured?

- Which decisions is management likely to make in the future?

- Which new technologies will be available in the future?

- Which technologies today will be avoided or discontinued in the future?

- What business information might be requested tomorrow that isn’t used today?

- What is the relative value of each feature, and why?

When AI can answer such strategic questions better than humans, we’ll be living in the age of AI-run companies (and I’ll be living in the age of looking for a new job). It may happen someday, but not soon.

Finally, it’ll be a long time until AI is more trusted than human engineers. Whether due to actual AI limitations or irrational human bias, the result remains: a human executive expects to hear another human vouch that all code has been reviewed and tested. Until humans change, AI will be “mere” tooling. Technology advances fast, but humans don’t.

Conclusion

AI-assisted development is here, and it’s already too powerful for insurers to ignore. Like any powerful tool, it can be immensely valuable or immensely dangerous. Insurers today can safely realize a 75% reduction in their development costs and a 2x increase in their IT velocity if they use best practices and work with AI-compatible platforms.

I’m interested in meeting insurers seeking AI-compatible technologies and ways to safely harness the power of AI-assisted development. As AI continues its rapid pace of development, smart platform decisions today will amplify into massive future advantages.

About Dan Woods

Dan Woods is founder and CEO of Socotra, the AI-native insurance core platform. He earned a master’s degree in computer science from Stanford, where he concentrated in AI and published highly cited AI research. Dan was the third backend engineer at Palantir, where he composed Palantir’s first AI functionality. He later led Palantir’s partnerships and ran several deployments. Dan then founded Socotra to bring advanced but proven technology to the insurance industry.

The consistent thread of Dan’s career has been the intersection of AI and high-scale enterprise platforms. Socotra is therefore built with an unparalleled capability to scale and support AI in production.